@cpixip In short: what an awesome build! Extraordinary work; thank you for showing it.

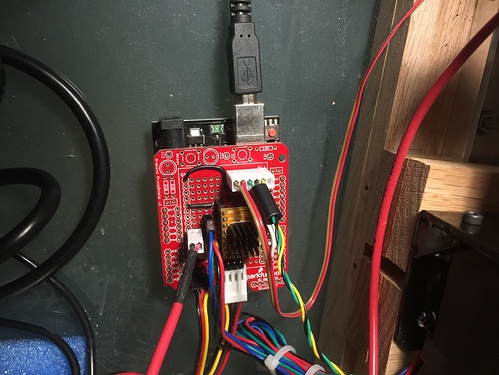

Thank you also for pointing out that kit from SparkFun; I will look into it some more.

Regarding the sensor I picked for testing: I was actually looking for a breakout board with something like the ADNS-3080 at a reasonable price for an initial test. The lens base is held by two screws; with it removed, the module is essentially the sensor on a board. The low price is because it is a mass-produced part, and I believe it may already have been superseded by a newer one. This video shows a bit of what it sees when used as a 30x30 camera (the link is cued to that part). Since I didn’t care about the light source, it was the perfect breakout for testing.
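Using a sensor like this as a 30x30 camera boils down to reading 900 raw pixel bytes and arranging them into rows. A minimal sketch, assuming the bytes have already been read out of the sensor and that the intensity sits in the low 6 bits of each byte; the bit depth and the function name `frame_to_grid` are assumptions here, so check the datasheet of the actual part:

```python
def frame_to_grid(raw, size=30, bits=6):
    """Arrange a flat list of size*size raw pixel bytes into rows of 0-255 values.

    Assumption: the pixel intensity is stored in the low `bits` bits of each byte;
    any higher bits (e.g. status flags) are masked off.
    """
    if len(raw) != size * size:
        raise ValueError(f"expected {size * size} pixels, got {len(raw)}")
    mask = (1 << bits) - 1
    scale = 255 / mask  # stretch the sensor's range to 8-bit grayscale
    values = [round((b & mask) * scale) for b in raw]
    # Split the flat list into `size` rows of `size` pixels each.
    return [values[r * size:(r + 1) * size] for r in range(size)]


if __name__ == "__main__":
    # A fake frame with a horizontal gradient, just to show the layout.
    fake = [col * 2 for _ in range(30) for col in range(30)]
    grid = frame_to_grid(fake)
    print(len(grid), "rows x", len(grid[0]), "columns")
```

The resulting rows can be handed to any image library or plotted directly to see what the sensor is looking at.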

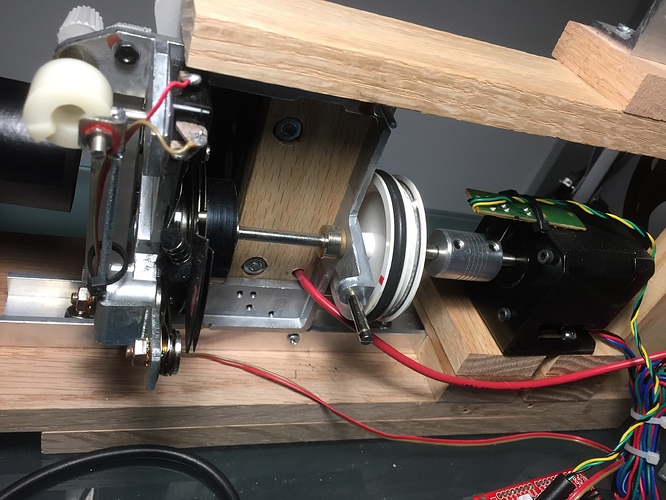

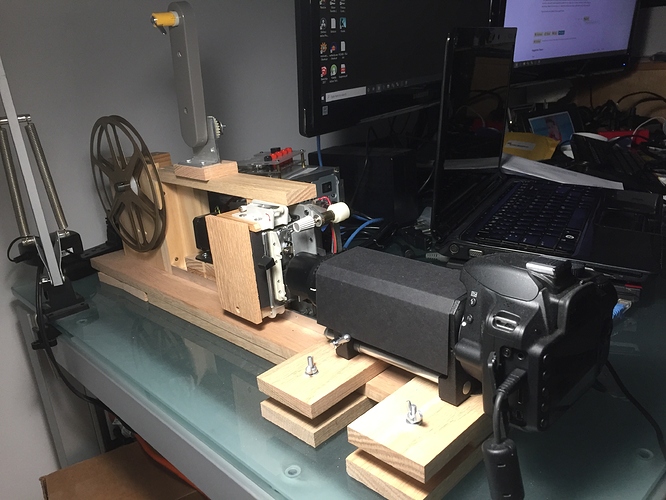

If you have not come across it, my first build is here; the second version is an incremental improvement on it with the same building blocks (better stepper driver, better optics, better bellows, better camera support).

Regarding your comment:

> the fact that the sprocket teeth were substantially smaller than the film sprocket itself. So they were a bad choice for defining the sprocket position precisely. While the film frame stayed in the camera view, it was dancing up and down noticeably from frame to frame. That was mainly due to some slight variations in friction between the film and transport mechanism.

While the results of my second build are noticeably better, I still have a little wobble at the sprocket holes. This gate works for both 8 and Super 8; it is switched by sliding the gate plate so that the sprocket holes align with the claw.
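One way to put a number on that wobble, and later compare it against a new transport, is to log the detected sprocket-hole position in every captured frame and summarize it. A minimal sketch, assuming a list of per-frame vertical hole positions in pixels produced by whatever detector is used; the function name and the keys of the result are hypothetical:

```python
import statistics


def sprocket_jitter(hole_y_px):
    """Summarize frame-to-frame vertical wobble from detected sprocket-hole positions (pixels)."""
    if len(hole_y_px) < 2:
        raise ValueError("need at least two frames")
    return {
        # Average hole position: the nominal registration point.
        "mean_px": statistics.fmean(hole_y_px),
        # Population standard deviation: overall spread of the wobble.
        "std_px": statistics.pstdev(hole_y_px),
        # Worst-case spread across the whole run.
        "peak_to_peak_px": max(hole_y_px) - min(hole_y_px),
        # Largest jump between two consecutive frames (most visible on playback).
        "max_step_px": max(abs(b - a) for a, b in zip(hole_y_px, hole_y_px[1:])),
    }


if __name__ == "__main__":
    print(sprocket_jitter([100, 102, 99, 101, 100, 103]))
```

The largest consecutive jump is listed separately because a slow drift and a frame-to-frame dance can have the same standard deviation but look very different on screen.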

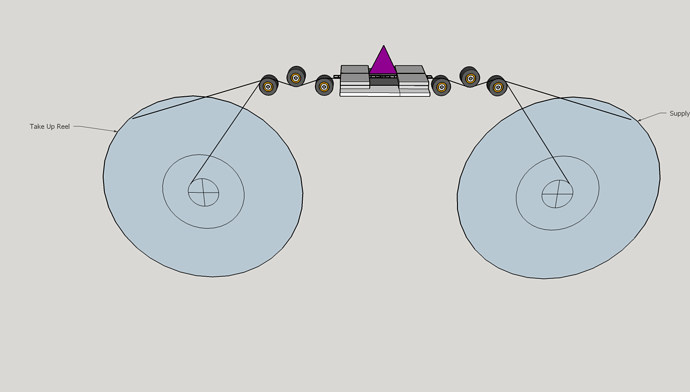

So here I am, starting to build a transport from scratch. My first thought is to move the film without a capstan or sprockets… I know it is a tall order, but I will give it a try. In my case, I have about the same amount of 8 and Super 8 film; both are the same width but have different frame heights, so there is the added complexity of different frame sizes and a different travel distance per frame. I am thinking of using the micro-stepping capability of the stepper driver to handle that.
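With micro-stepping, one frame of travel is rarely a whole number of microsteps, and rounding every frame would make the position drift over a reel. A minimal sketch of carrying the fractional remainder from frame to frame, assuming a 200-step motor at 16 microsteps driving the film at a fixed radius (both hypothetical values; the class and function names are also hypothetical). The pitches used are the standard perforation pitches: 0.150 in for Regular 8 and 0.1667 in for Super 8.

```python
import math

# Film advance per frame (perforation pitch) in mm.
FRAME_PITCH_MM = {"8": 3.81, "super8": 4.234}


def microsteps_per_mm(radius_mm: float, full_steps_per_rev: int = 200, microsteps: int = 16) -> float:
    """Microsteps needed to move 1 mm of film at the given drive radius."""
    return full_steps_per_rev * microsteps / (2 * math.pi * radius_mm)


class FrameAdvancer:
    """Turns frame advances into whole microsteps.

    The fractional part of each frame is carried over to the next one instead of
    being rounded away, so the total error stays below one microstep however many
    frames are advanced. The radius is treated as fixed (a simplification).
    """

    def __init__(self, fmt: str, radius_mm: float, full_steps_per_rev: int = 200, microsteps: int = 16):
        self.steps_per_frame = FRAME_PITCH_MM[fmt] * microsteps_per_mm(radius_mm, full_steps_per_rev, microsteps)
        self._remainder = 0.0

    def next_frame(self) -> int:
        exact = self.steps_per_frame + self._remainder
        steps = math.floor(exact)       # whole microsteps to issue now
        self._remainder = exact - steps  # leftover fraction, always in [0, 1)
        return steps


if __name__ == "__main__":
    for fmt in ("8", "super8"):
        adv = FrameAdvancer(fmt, radius_mm=20.0)
        print(fmt, round(adv.steps_per_frame, 3), [adv.next_frame() for _ in range(6)])
```

Switching formats then only means picking a different pitch; the per-frame step counts alternate between two neighbouring integers so that the long-run average matches the exact value.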

My thinking is that this would only work if either the mechanics and sprocket detection are excellent (which, given my mechanical limitations, is a bit of a stretch) or… the film displacement is measured very accurately and the problems are corrected in software.
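The "measure and correct in software" route can be sketched as a closed loop: step in small chunks, read how far the film actually moved, and stop once the measured travel reaches one frame, subtracting any overshoot from the next frame's target. A minimal sketch, assuming an optical-flow sensor that reports motion as signed 8-bit counts since the last read (as ADNS-3080-type sensors do; check the datasheet of the part used) and a hypothetical calibration of 63 counts per mm that would have to be measured for the real optics. The motor and sensor are replaced by a simulated film with slip; all names here are hypothetical.

```python
def to_signed8(raw: int) -> int:
    """Interpret an 8-bit register value as two's complement (-128..127)."""
    return raw - 256 if raw >= 128 else raw


def advance_measured(step_motor, read_delta, target_mm, counts_per_mm, chunk=4, max_steps=100_000):
    """Step in chunks until the *measured* travel reaches target_mm; return the measured travel.

    step_motor(n): hypothetical callback issuing n microsteps.
    read_delta():  hypothetical callback returning the raw 8-bit count since the last read.
    """
    moved_mm, steps = 0.0, 0
    while moved_mm < target_mm:
        if steps >= max_steps:
            raise RuntimeError("film is not moving as expected (slipping or jammed?)")
        step_motor(chunk)
        steps += chunk
        moved_mm += to_signed8(read_delta()) / counts_per_mm
    return moved_mm


class SimulatedFilm:
    """Desk stand-in for motor + sensor: each microstep moves mm_per_step * slip,
    and the sensor only reports whole counts (the fraction stays unread)."""

    def __init__(self, mm_per_step=0.01, slip=0.9, counts_per_mm=63.0):
        self.mm_per_step, self.slip, self.counts_per_mm = mm_per_step, slip, counts_per_mm
        self.position_mm = 0.0
        self._unread_mm = 0.0

    def step(self, n):
        d = n * self.mm_per_step * self.slip
        self.position_mm += d
        self._unread_mm += d

    def read_delta(self):
        counts = max(-128, min(127, int(self._unread_mm * self.counts_per_mm)))
        self._unread_mm -= counts / self.counts_per_mm
        return counts & 0xFF  # raw register byte, as the sensor would report it


pitch = 4.234  # Super 8 frame pitch in mm
film = SimulatedFilm()
carry = 0.0
for _ in range(10):
    target = pitch - carry
    moved = advance_measured(film.step, film.read_delta, target, film.counts_per_mm)
    carry = moved - target  # overshoot is taken off the next frame's target
print(round(film.position_mm, 3), "mm after 10 frames")
```

Because the stop condition uses measured travel rather than step count, slip (simulated here as 10%) does not accumulate; the remaining error is bounded by one chunk plus the sensor's count resolution.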

Here is my first idea for the transport, with one stepper motor per reel.
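With one stepper per reel and no capstan, the motor steps needed per frame depend on how much film is currently on each reel, and that changes continuously. A minimal sketch of the usual annulus model, where the wound film's cross-section area equals length times thickness, so that π(r² − r_core²) = L·t. The reel size, film thickness and microsteps per revolution below are hypothetical values chosen for illustration, as are the function names:

```python
import math


def reel_radius_mm(wound_length_mm: float, core_radius_mm: float, film_thickness_mm: float) -> float:
    """Outer radius of the film pack: pi * (r**2 - r_core**2) = L * t, solved for r."""
    return math.sqrt(core_radius_mm ** 2 + wound_length_mm * film_thickness_mm / math.pi)


def microsteps_for_travel(travel_mm: float, radius_mm: float, microsteps_per_rev: int = 3200) -> float:
    """Microsteps a reel motor must turn to wind or unwind travel_mm at the given radius."""
    return travel_mm / (2 * math.pi * radius_mm) * microsteps_per_rev


# Hypothetical numbers: 15 m of film, 0.14 mm thick, on a 16 mm radius core.
TOTAL_MM, CORE_MM, THICK_MM = 15_000.0, 16.0, 0.14
PITCH_MM = 4.234  # Super 8 frame pitch

for transported_mm in (0.0, TOTAL_MM / 2, TOTAL_MM):
    supply_r = reel_radius_mm(TOTAL_MM - transported_mm, CORE_MM, THICK_MM)
    takeup_r = reel_radius_mm(transported_mm, CORE_MM, THICK_MM)
    print(f"{transported_mm / 1000:5.1f} m: "
          f"supply r={supply_r:5.1f} mm ({microsteps_for_travel(PITCH_MM, supply_r):6.1f} usteps/frame), "
          f"take-up r={takeup_r:5.1f} mm ({microsteps_for_travel(PITCH_MM, takeup_r):6.1f} usteps/frame)")
```

The two reels need different step counts for the same frame, and the ratio flips over the course of the reel; the model also ignores winding tension and pack looseness, which is one more reason to measure the actual film displacement rather than trust the step count alone.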

I am still in brainstorming mode, so all the pointers you have provided are very welcome. Thank you!