… ok, time to wrap things up in this thread. My primary goal was to find out which scanner illumination yields better results: narrowband sampling via three differently colored LEDs, or wideband sampling via an appropriate white-light LED.

Remember, these are simulations (which might be wrong) based on old data sheets (I am not sure I am interpreting the data correctly, or even that the data is correct).

Nevertheless, this is what I did:

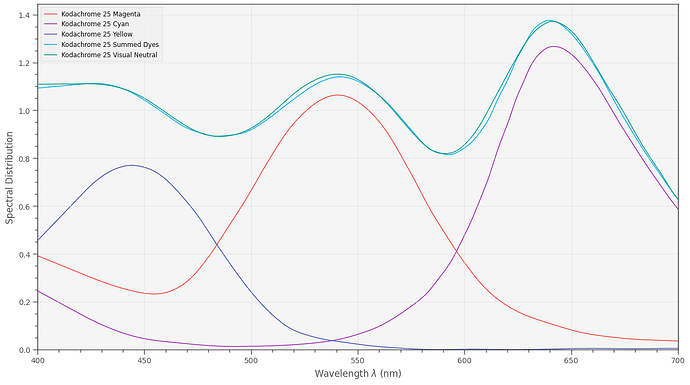

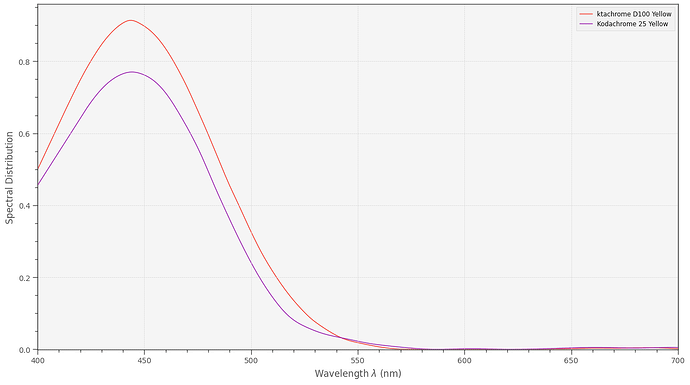

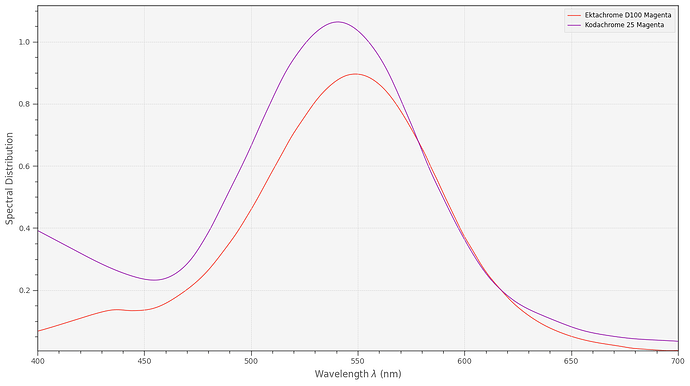

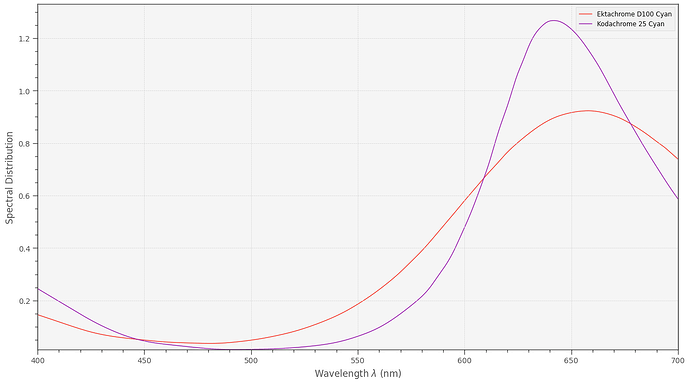

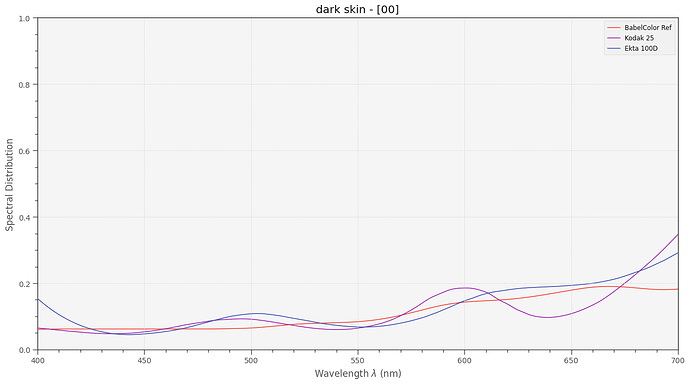

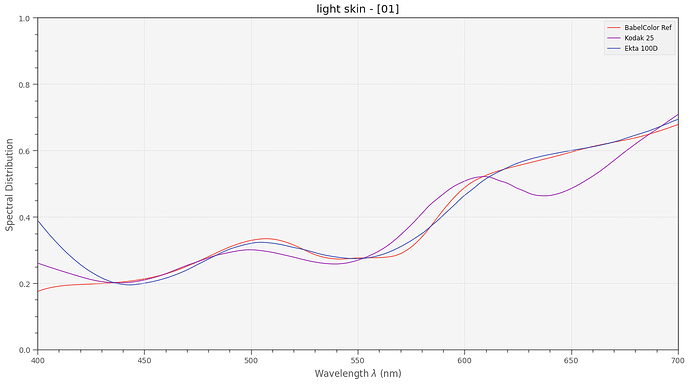

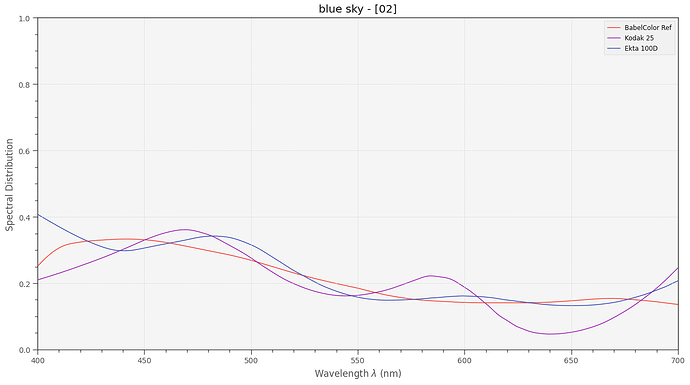

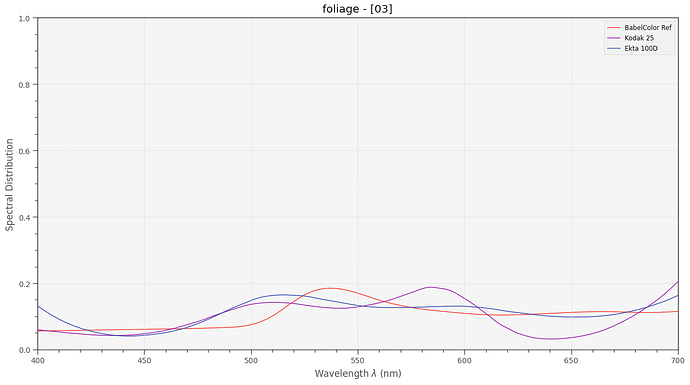

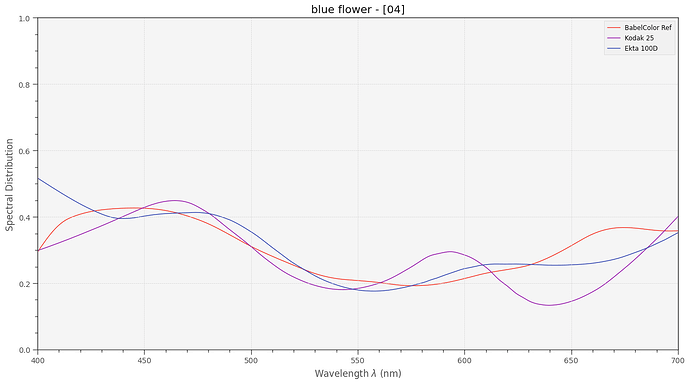

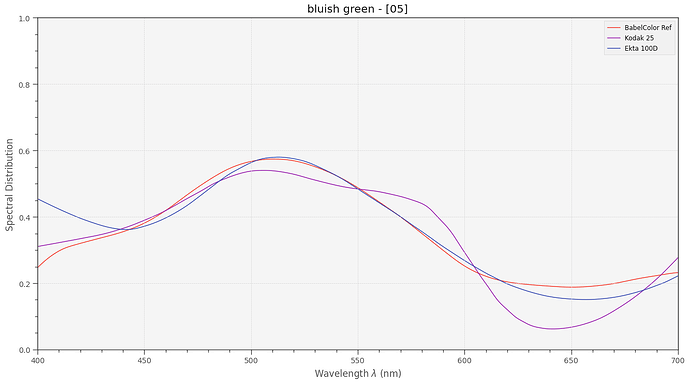

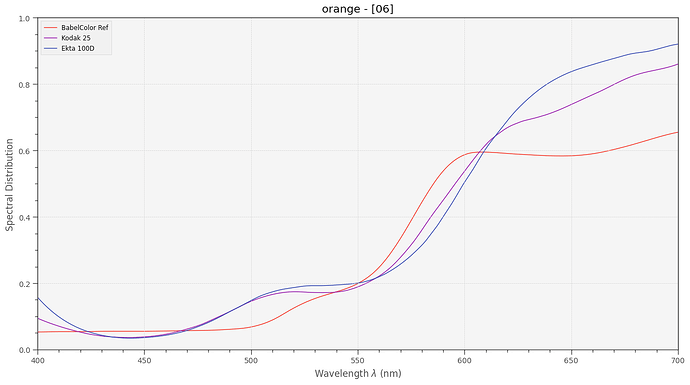

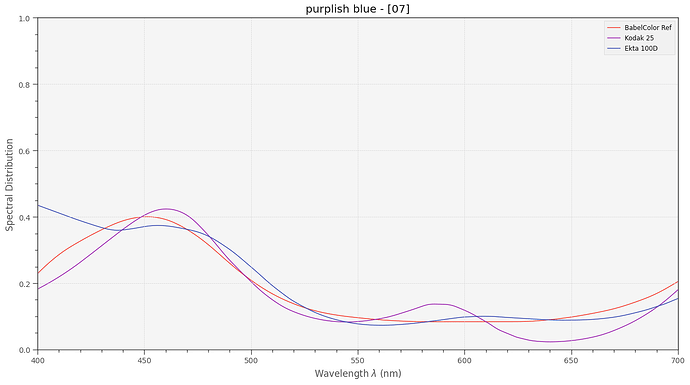

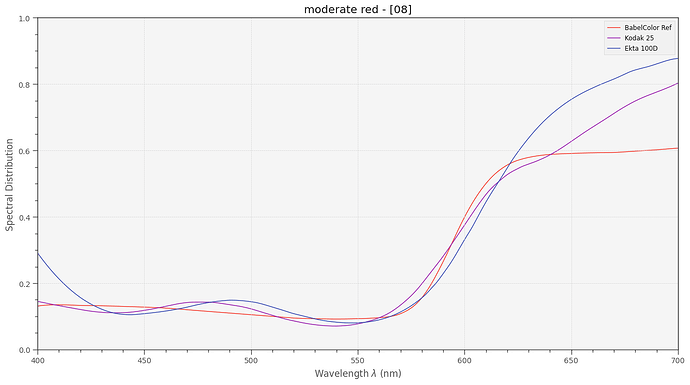

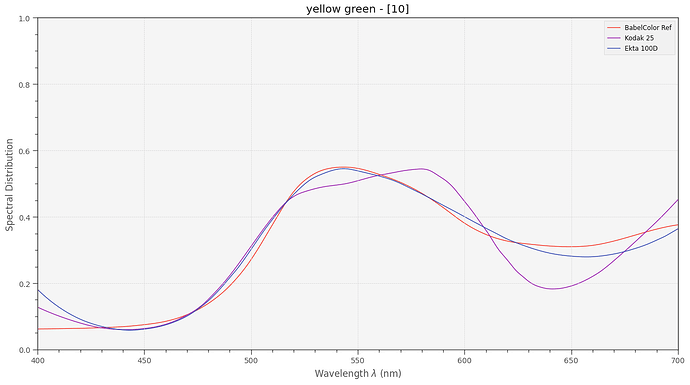

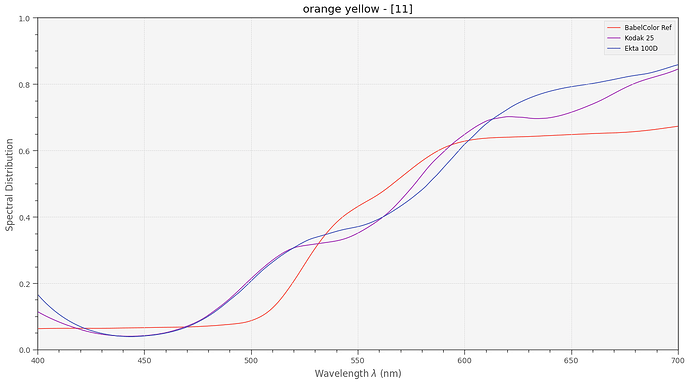

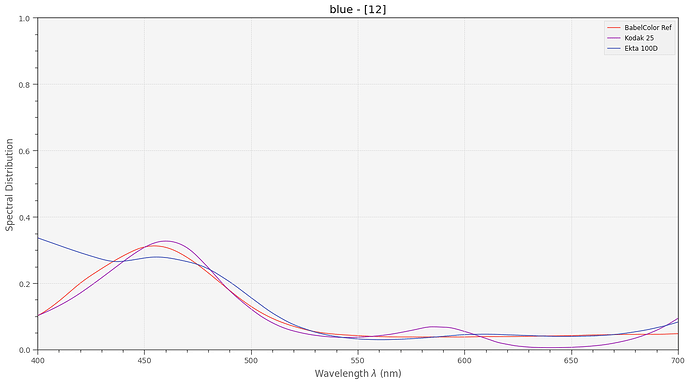

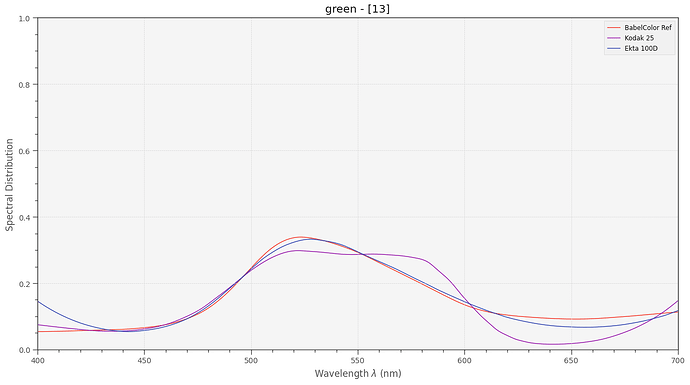

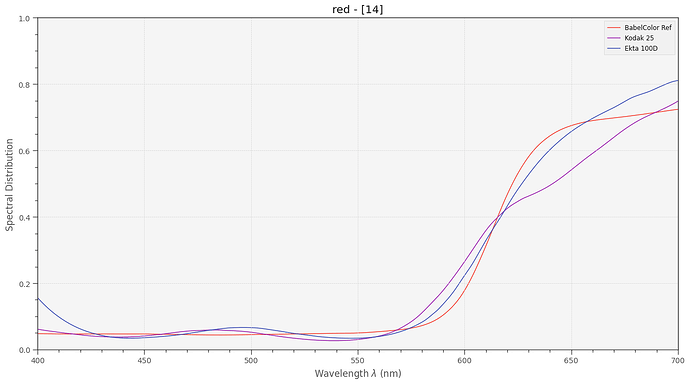

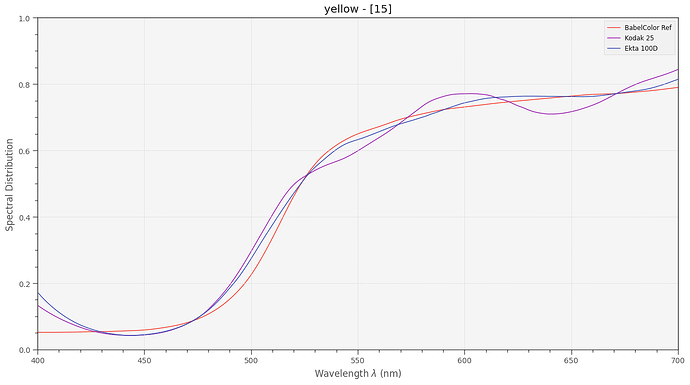

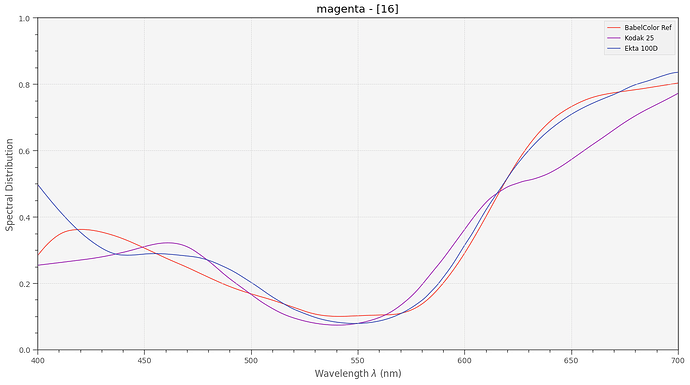

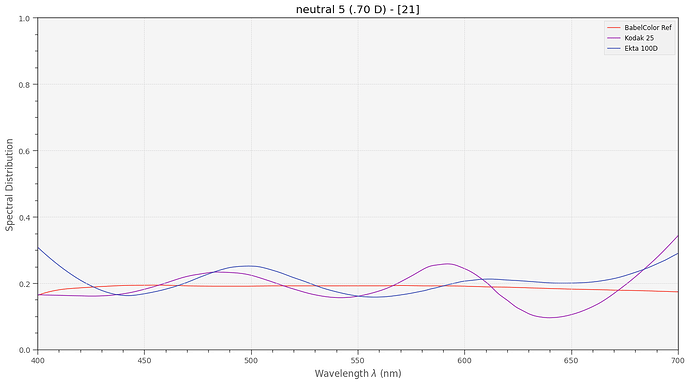

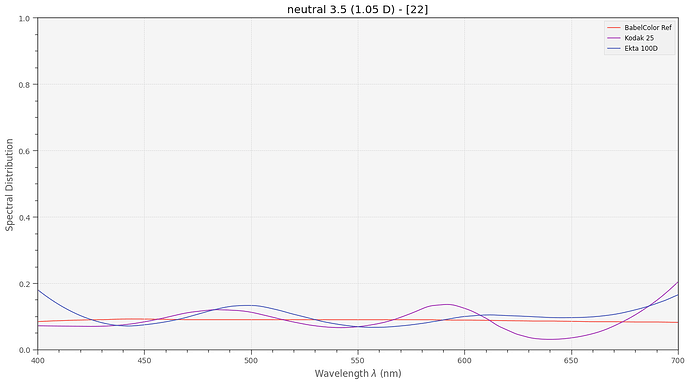

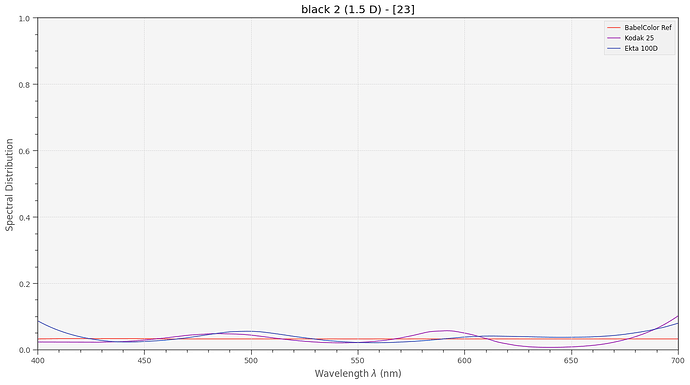

- Created a virtual color chart based on printed dye spectra for reference (“Ref”).

- Created a second virtual color chart with the same appearance when viewed under D65 illumination, but using only the spectral characteristics of Kodachrome 25 film (“Kodak”).

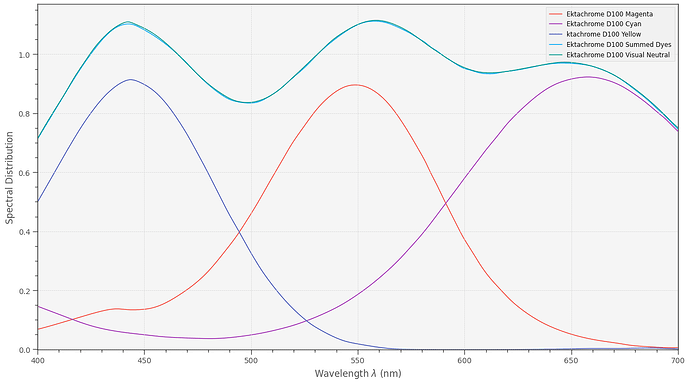

- Created a third virtual color chart, this time using the spectral characteristics of Ektachrome 100 D (“Ekta”).

- Created a virtual camera simulating capture of the color charts with a Raspberry Pi HQ sensor.

- Computed the difference between what the camera recorded and the reference color chart under various illumination setups.
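The capture step of such a virtual camera can be sketched as a spectral integration: the light reaching each sensor channel is the illuminant times the patch transmittance, weighted by that channel's sensitivity. The same integral with the film removed yields red and blue white balance gains. This is a minimal sketch under stated assumptions, not the code behind the simulations in this post; the function names, the 5 nm sampling and the Gaussian sensitivity curves in the demo are hypothetical placeholders, not Raspberry Pi HQ (IMX477) data.

```python
import numpy as np

def blackbody(wavelengths_nm, temperature_k):
    """Relative spectral radiance of a blackbody (Planck's law), peak-normalized to 1."""
    h, c, k = 6.62607015e-34, 2.99792458e8, 1.380649e-23
    lam = wavelengths_nm * 1e-9
    radiance = (2.0 * h * c**2 / lam**5) / np.expm1(h * c / (lam * k * temperature_k))
    return radiance / radiance.max()

def camera_rgb(wavelengths_nm, illuminant, transmittance, sensitivities):
    """Raw RGB response of the virtual camera to one film patch.

    illuminant    : (N,) relative spectral power of the light source
    transmittance : (N,) spectral transmittance of the patch (0..1)
    sensitivities : (N, 3) spectral sensitivities of the R, G, B channels
    Assumes evenly spaced wavelength samples.
    """
    step = wavelengths_nm[1] - wavelengths_nm[0]
    # Light passing the patch, integrated against each channel's sensitivity
    return (illuminant * transmittance) @ sensitivities * step

def white_balance_gains(wavelengths_nm, illuminant, sensitivities):
    """Red and blue gains that render the bare illuminant (no film) neutral."""
    r, g, b = camera_rgb(wavelengths_nm, illuminant, np.ones_like(illuminant), sensitivities)
    return g / r, g / b

def gaussian(wavelengths_nm, center_nm, width_nm):
    return np.exp(-0.5 * ((wavelengths_nm - center_nm) / width_nm) ** 2)

if __name__ == "__main__":
    wl = np.arange(380.0, 781.0, 5.0)
    # Hypothetical placeholder sensitivities, NOT measured IMX477 curves
    sens = np.stack([gaussian(wl, 600, 40), gaussian(wl, 540, 40), gaussian(wl, 450, 40)], axis=1)
    gain_r, gain_b = white_balance_gains(wl, blackbody(wl, 3200.0), sens)
    print(f"3200K blackbody: red gain {gain_r:.3f}, blue gain {gain_b:.3f}")
```

Swapping in measured sensor curves, real LED spectra and film dye transmittances would turn this toy into something closer to the setup described here.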

With respect to the fourth point, the virtual camera, I implemented basically two different modes of operation:

- Operating the camera via libcamera/picamera2. That means:

  - Estimating the appropriate white balance from the illumination spectrum, that is, adjusting the red and blue gains to the illumination. This could also be done manually; it does not matter much.

  - Reading in the information from the scientific tuning file.

  - Estimating a correlated color temperature (cct) from the given red and blue channel gains.

  - Using that color temperature to calculate a CCM (color correction matrix) from the ones stored in the scientific tuning file.

  - Using that CCM to compute the colors “seen” by the camera.

- Alternatively, using an optimized CCM to compute the resulting colors, in effect bypassing libcamera (similar to raw captures). The optimized CCM was derived from either the virtual Kodachrome 25 or the virtual Ektachrome 100D color chart:

  - Taking an image of the color chart used for calibration under a defined illumination.

  - Running an optimization scheme that finds the transformation matrix mapping the resulting colors as closely as possible onto the reference target. This is the simplest direct CCM approach possible; more advanced software can add 1D or even 3D LUTs to the processing path. I have not implemented these more advanced approaches.
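The direct path can be sketched as a plain linear least-squares fit of a 3x3 matrix that maps the camera's patch colors onto the reference values. This is a hedged stand-in for the optimizing scheme mentioned above, not the author's implementation: it minimizes the error in linear RGB rather than ΔE, and the function names, the choice of linear sRGB as target space and the random demo data are assumptions for illustration.

```python
import numpy as np

def fit_ccm(camera_rgb, target_rgb):
    """Least-squares 3x3 color correction matrix.

    camera_rgb : (P, 3) white-balanced, linear camera values of the P calibration patches
    target_rgb : (P, 3) linear reference values of the same patches (e.g. linear sRGB)
    Returns ccm such that ccm @ rgb maps a camera color (column vector) to the target space.
    """
    # Solve camera_rgb @ X ~= target_rgb in the least-squares sense; X is 3x3
    x, *_ = np.linalg.lstsq(camera_rgb, target_rgb, rcond=None)
    return x.T

def apply_ccm(ccm, rgb):
    """Apply a CCM to an (..., 3) array of linear RGB values."""
    return rgb @ ccm.T

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    camera = rng.uniform(0.05, 0.9, size=(24, 3))  # stand-in for 24 recorded patches
    true_ccm = np.array([[1.6, -0.4, -0.2],
                         [-0.3, 1.5, -0.2],
                         [0.0, -0.5, 1.5]])
    target = apply_ccm(true_ccm, camera)
    print(np.round(fit_ccm(camera, target), 3))
```

A ΔE-minimizing optimizer (for example one built on `scipy.optimize.minimize`) could use this linear solution as its starting point.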

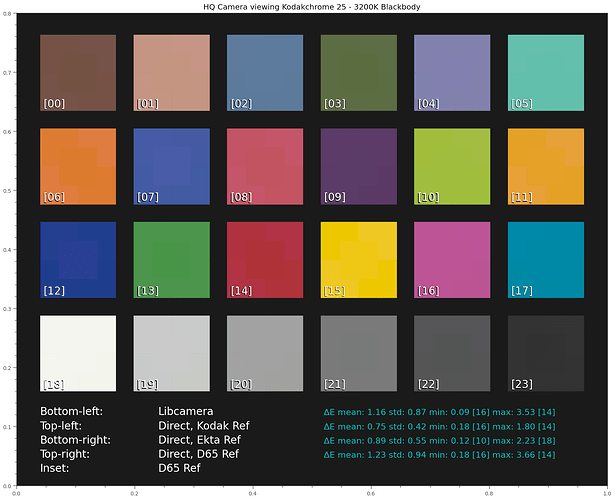

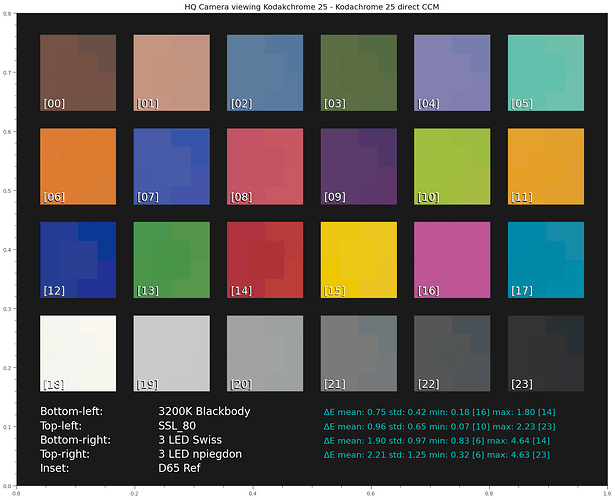

Here’s the simulation result for the various cameras implemented, using a 3200K blackbody radiator as illumination (see the last plot of this post for the illumination spectra used here). This should be quite similar to the tungsten lamps used for projecting S8 footage in the old days.

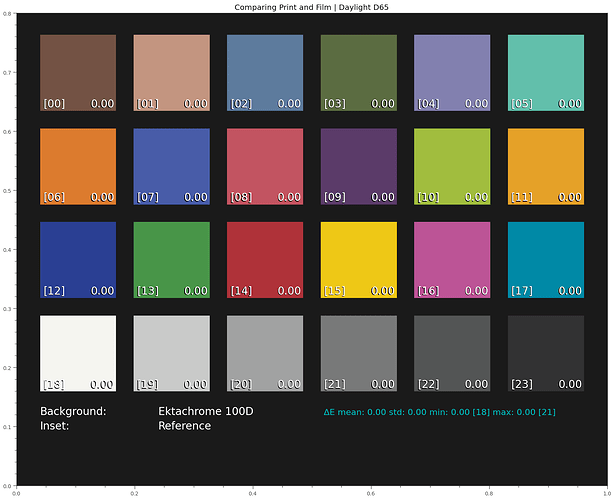

Some explanation of how to read this display is in order. Overall, it shows how the patches of a virtual color chart would be recorded by our virtual camera. For each patch, the central area (“Inset”) shows how the reference color chart would look under D65 illumination. The segments around that center patch show the results of the various simulation runs.

Specifically, this chart shows the appearance of the Kodachrome 25 based color chart illuminated with a 3200K blackbody radiator, for various processing schemes:

- libcamera: the CCM is computed by libcamera/picamera2, as described above.

- Direct, Kodak Ref: the CCM used was optimized with the Kodachrome 25 color chart.

- Direct, Ekta Ref: the CCM used was optimized with the color chart realized with Ektachrome 100D dyes.

- Direct, D65 Ref: the CCM was computed from the color chart using print dyes, not film dyes.

Visually, the differences between the processing schemes are hard to notice. All simulated “cameras” performed quite well.

The values listed beside each entry give more quantitative information:

- ΔE mean: the average color error of the set.

- ΔE std: the standard deviation of the color error.

- ΔE min: the smallest error found.

- ΔE max: the largest error found.

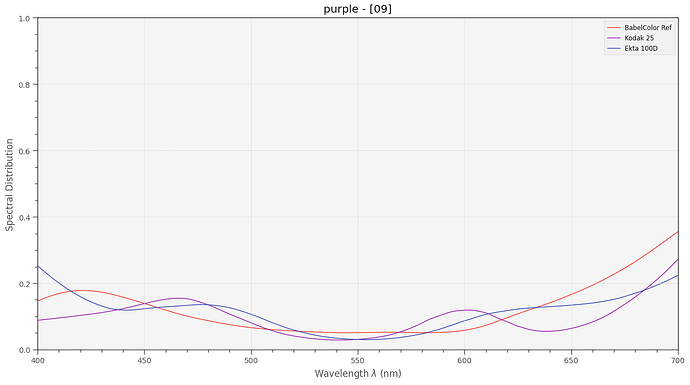

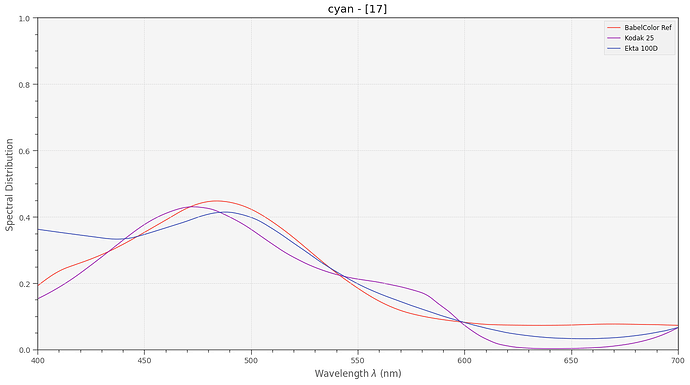

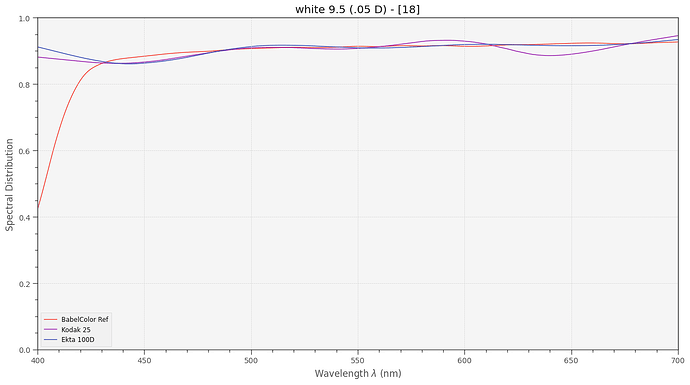

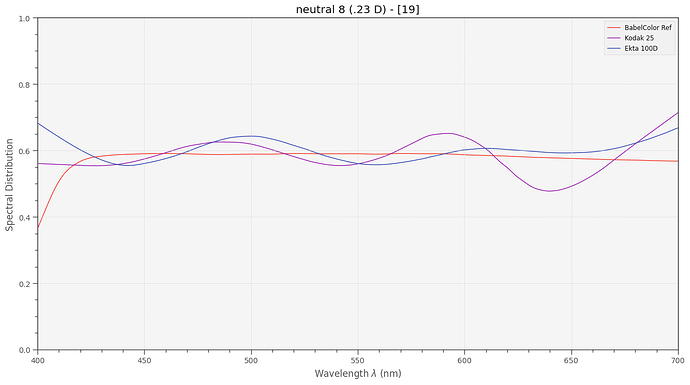

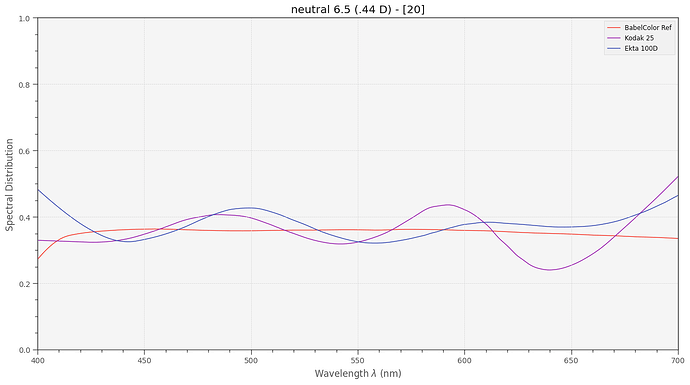

For the min and max entries, the number in [..] indicates the patch where that error was found.
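The ΔE statistics can be computed along these lines. The post does not say which ΔE formula was used, so this sketch assumes the simple CIE76 distance in CIELAB with a D65 white point; CIEDE2000 would give different numbers. The function names, the population standard deviation and the 1-based patch numbering are assumptions as well.

```python
import numpy as np

# D65 reference white (CIE 1931 2° observer), with Y normalized to 1
D65_WHITE = np.array([0.95047, 1.0, 1.08883])

def xyz_to_lab(xyz, white=D65_WHITE):
    """Convert CIE XYZ values (..., 3) to CIELAB."""
    t = np.asarray(xyz, dtype=float) / white
    delta = 6.0 / 29.0
    # Cube root above the linear-segment threshold, linear segment below it
    f = np.where(t > delta**3, np.cbrt(t), t / (3.0 * delta**2) + 4.0 / 29.0)
    L = 116.0 * f[..., 1] - 16.0
    a = 500.0 * (f[..., 0] - f[..., 1])
    b = 200.0 * (f[..., 1] - f[..., 2])
    return np.stack([L, a, b], axis=-1)

def delta_e_summary(lab_measured, lab_reference):
    """Mean, std, min and max of the CIE76 color error over all patches.

    min and max are returned together with the (assumed 1-based) number of the patch concerned.
    """
    de = np.linalg.norm(np.asarray(lab_measured) - np.asarray(lab_reference), axis=-1)
    i_min, i_max = int(np.argmin(de)), int(np.argmax(de))
    return {
        "mean": float(de.mean()),
        "std": float(de.std()),  # population std (ddof=0)
        "min": (float(de[i_min]), i_min + 1),
        "max": (float(de[i_max]), i_max + 1),
    }
```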

The best performance on the Kodachrome target is obtained with the direct method (calibrated CCM) using the Kodachrome color chart for calibration. Not too surprising, actually. Using the Ektachrome chart as calibration reference instead, we see a slightly larger ΔE = 0.89. An even larger one, ΔE = 1.23, results when using the color chart based on print dyes. So this set of simulations shows that the dyes in the calibration chart do matter. Remember, “Ref” uses print dyes, “Kodak” uses Kodachrome dyes and “Ekta” uses Ektachrome dyes. Ideally, one should calibrate with a chart made from the dyes of the material one plans to scan.

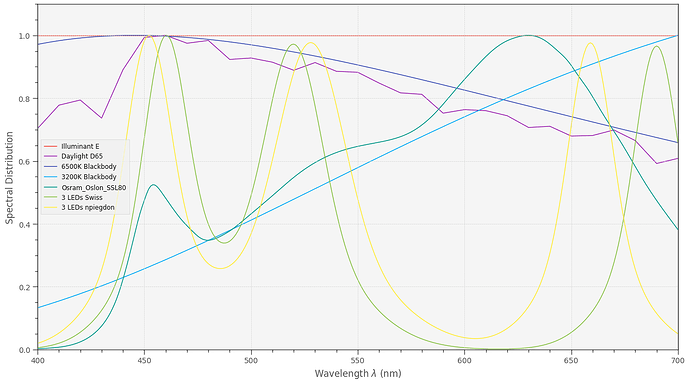

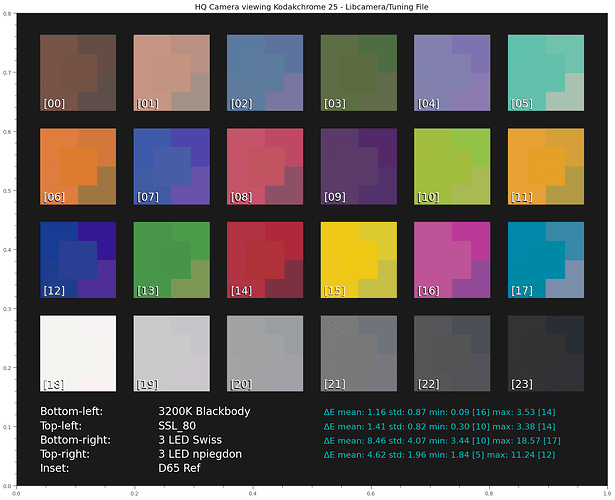

Ok, next. The following chart examines the influence of different illuminations under the standard libcamera/picamera2 processing, using the scientific tuning file. Each illumination is used to estimate a correlated color temperature, and this color temperature is in turn used to compute the camera's CCM. Specifically, the following illuminations have been tested:

- 3200K Blackbody: estimated cct 3200.0 K

- SSL_80: estimated cct 3146.93236375 K

- 3 LED Swiss: estimated cct 7772.82512135 K

- 3 LED npiegdon: estimated cct 7403.21605312 K

One can already see that the estimated color temperatures of the two 3 LED setups are way off. In fact, narrowband LED illumination does not really have a correlated color temperature (cct).
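The libcamera-style chain of gains → cct → CCM can be sketched as follows. This is a simplified stand-in, not libcamera's actual code. It assumes the tuning file's AWB curve has already been read into rows of (ct, r, b), where r and b are taken to be the R/G and B/G ratios of grey at that color temperature (so the white balance gains are their reciprocals), and that the CCM table has been read into (ct, matrix) pairs; please check this against the `rpi.awb` and `rpi.ccm` sections of your own tuning file. The cct is estimated as the point on the curve closest to the measured gains, and the CCM is interpolated linearly in ct between the two nearest calibrated matrices. The function names and the demo numbers are hypothetical.

```python
import numpy as np

def estimate_cct(gain_r, gain_b, ct_curve, samples=2001):
    """Estimate a correlated color temperature from red/blue white balance gains.

    ct_curve: (K, 3) rows of (ct, r, b) with ct ascending; r and b are ASSUMED
    to be the R/G and B/G ratios of grey at that ct, i.e. reciprocals of the gains.
    """
    curve = np.asarray(ct_curve, dtype=float)
    cts = np.linspace(curve[0, 0], curve[-1, 0], samples)
    # Piecewise-linear curve through the calibrated points
    r = np.interp(cts, curve[:, 0], curve[:, 1])
    b = np.interp(cts, curve[:, 0], curve[:, 2])
    # Pick the ct whose curve point is closest to the measured (1/gain_r, 1/gain_b)
    dist2 = (r - 1.0 / gain_r) ** 2 + (b - 1.0 / gain_b) ** 2
    return float(cts[np.argmin(dist2)])

def interpolate_ccm(ct, ccm_table):
    """Linearly interpolate a 3x3 CCM in ct; ccm_table: list of (ct, 9 or 3x3 values), ct ascending."""
    cts = np.array([entry[0] for entry in ccm_table], dtype=float)
    mats = np.array([np.reshape(entry[1], (3, 3)) for entry in ccm_table], dtype=float)
    # Clamp to the calibrated range
    if ct <= cts[0]:
        return mats[0]
    if ct >= cts[-1]:
        return mats[-1]
    hi = int(np.searchsorted(cts, ct))  # first calibrated ct >= requested ct
    lo = hi - 1
    w = (ct - cts[lo]) / (cts[hi] - cts[lo])
    return (1.0 - w) * mats[lo] + w * mats[hi]

if __name__ == "__main__":
    # Hypothetical numbers for illustration only, not from a real tuning file
    curve = [(3000, 0.60, 0.30), (6000, 0.40, 0.60)]
    table = [(3000, np.eye(3) * 1.2), (6000, np.eye(3) * 1.8)]
    ct = estimate_cct(1 / 0.50, 1 / 0.45, curve)
    print(ct, interpolate_ccm(ct, table))
```

With a 3 LED illumination, the point defined by the gains can lie far away from this curve; the nearest-point search still returns some ct, but, as noted above, that number has little physical meaning.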

Anyway - let’s see the simulation results:

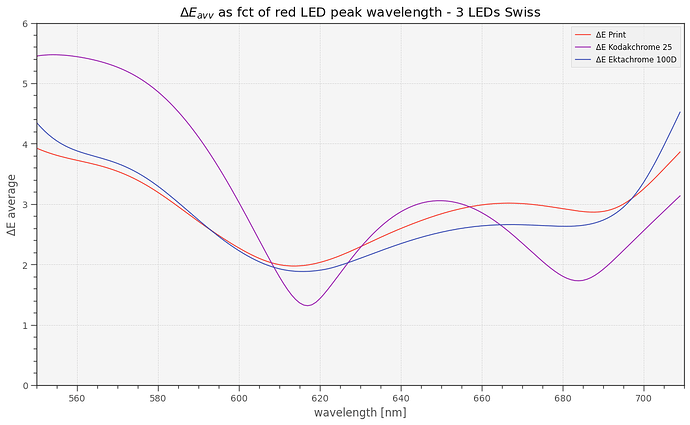

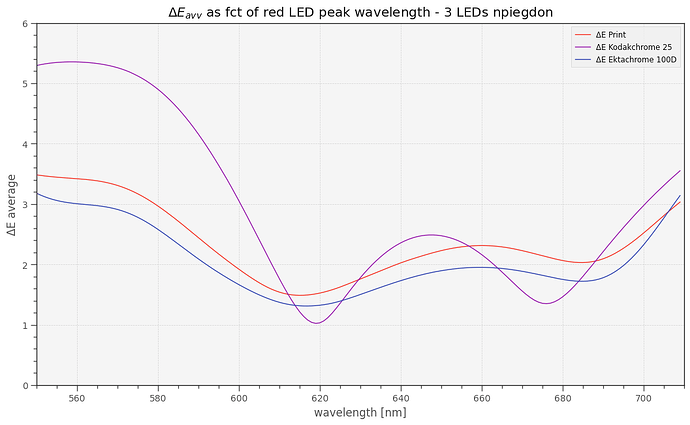

Yep, the results are as expected. Both 3 LED setups show quite noticeable deviations in color appearance compared to the 3200K blackbody illumination. The main difference between “Swiss” and “npiegdon” is the position of the red LED; obviously, the placement of the narrowband LEDs within the spectrum influences the color fidelity. The latter has only half the color error of the Swiss one.

Note that the modern-day bulb substitute, the “SSL_80” broadband LED, even yields slightly better results than our reference illumination “3200K blackbody”; however, the difference is tiny. Our simulation approach is too simple to draw any conclusions from such small deviations.

Ok. So pairing narrowband LED illumination with standard libcamera/picamera2 processing is probably not such a good idea. Note that when working with .dng files, your raw converter will by default use the CCM computed by libcamera; this is a result of the way picamera2 creates the .dng file.

Well, we can do better if we compute an optimized CCM based on a previous calibration run.

Here are the results of calibrating with the film-dye-based Kodachrome 25 color chart under various illuminations. All ΔE values improved, as expected.

Note that a calibration using only a CCM is a rather simple approach; usually, 1D LUTs or even 3D LUTs would be added to the calibration recipe here. However, I neither have software available for calculating LUTs, nor is a simple 24-patch color checker sufficient to determine the many values a LUT requires. So that’s all I can offer in terms of calibration-based CCMs.

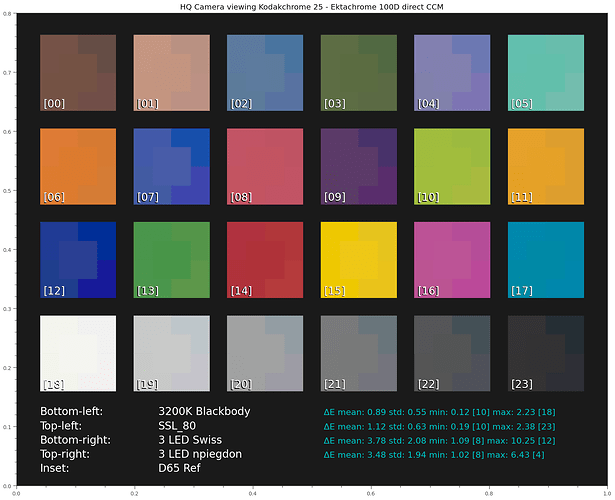

Ok, lastly, let’s check what happens if we do not base our calibration on a simulated Kodachrome 25 color chart (which uses the same dyes as the scan), but a simulated Ektachrome 100D (which uses different dyes).

Well, here’s the simulation result:

As expected, the ΔE values get slightly worse. Since we are using the wrong color chart (“Ekta”), with dyes different from those of the media we are actually scanning (“Kodak”), this is not really a surprise. But the drop in fidelity is not as big as I expected.

Summarizing what I have learned on this journey:

- The combination of a modern broadband white-light LED with libcamera/picamera2 processing based on the scientific tuning file should yield quite acceptable color fidelity.

- Using narrowband 3 LED illumination together with a calibration yields comparable results, even if the calibration target is not identical to the film stock scanned.

- The results of narrowband 3 LED illuminations seem to be extremely sensitive to the wavelengths of the LEDs used.