Quick update

Put the testing of the steppers on a brief hold. Got stuck on an issue (looks like a Pico SDK getchar bug, more info in the post). The serial-USB communication would hang at random, with the Pico choking on a character.

After a lot of testing, and better code with guardrails, the workaround was to call getchar_timeout_us with a zero timeout argument, so the read never blocks.
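To show the idea, here is a minimal sketch of non-blocking line reading, not the actual firmware. It assumes the character source returns a negative value when nothing is waiting, which is how `getchar_timeout_us(0)` reports a timeout on the Pico. The names `line_reader_t`, `line_reader_poll`, `fake_feed` and `fake_poll` are made up for illustration, and the fake source is only there so the sketch can run on a PC.

```c
#include <stdbool.h>
#include <stddef.h>

/* Non-blocking character source: returns a char (0..255), or a negative
 * value when nothing is waiting. On the Pico this could be a thin wrapper
 * such as: int pico_poll(void) { return getchar_timeout_us(0); }
 * (assumption: any negative return is treated as "no character"). */
typedef int (*poll_char_fn)(void);

typedef struct {
    char buf[64];   /* holds the line being assembled */
    size_t len;     /* characters collected so far */
} line_reader_t;

/* Drain whatever is available right now without blocking.
 * Returns true when a complete line (ended by '\n') is ready in r->buf. */
bool line_reader_poll(line_reader_t *r, poll_char_fn poll) {
    int c;
    while ((c = poll()) >= 0) {
        if (c == '\r') {
            continue;                 /* ignore CR from CRLF terminals */
        }
        if (c == '\n') {
            r->buf[r->len] = '\0';    /* terminate the finished line */
            r->len = 0;               /* next call starts a new line */
            return true;
        }
        if (r->len < sizeof r->buf - 1) {
            r->buf[r->len++] = (char)c;
        }
        /* Guardrail: characters beyond the buffer size are dropped. */
    }
    return false;                     /* nothing more to read for now */
}

/* Host-side stand-in for the serial port (hypothetical, for testing). */
static const char *fake_input = "";

void fake_feed(const char *s) { fake_input = s; }

int fake_poll(void) {
    if (*fake_input == '\0') {
        return -1;                    /* mimics "timeout, no character" */
    }
    return (unsigned char)*fake_input++;
}
```

Because the poll returns immediately, the main loop can keep servicing the steppers and only act when a full line has arrived.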

Currently implementing a simple command protocol/parser for the Pico's serial-USB communication. It should make it easier to change the stepper/transport parameters while I continue testing what now appear to be inherent eccentricity issues of the geared steppers.
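The protocol is not final, so the following is only a sketch of what such a parser could look like. It assumes a made-up text format of one command per line, a short name followed by an optional integer (for example `speed 1200` or `stop`). The names `command_t` and `parse_command` and the command words are hypothetical.

```c
#include <stdbool.h>
#include <stdlib.h>
#include <string.h>

typedef struct {
    char name[16];    /* command word, e.g. "speed" (hypothetical) */
    long value;       /* numeric argument, valid only if has_value */
    bool has_value;
} command_t;

/* Parse "NAME" or "NAME VALUE" from one line of text.
 * Returns false on an empty line, an over-long name, or a bad number. */
bool parse_command(const char *line, command_t *out) {
    while (*line == ' ') line++;              /* skip leading spaces */

    size_t n = strcspn(line, " ");            /* length of the name */
    if (n == 0 || n >= sizeof out->name) {
        return false;
    }
    memcpy(out->name, line, n);
    out->name[n] = '\0';
    line += n;

    while (*line == ' ') line++;              /* skip separator spaces */
    out->value = 0;
    out->has_value = false;
    if (*line == '\0') {
        return true;                          /* command without argument */
    }

    char *end;
    long v = strtol(line, &end, 10);
    if (end == line) {
        return false;                         /* no digits found */
    }
    while (*end == ' ') end++;                /* allow trailing spaces */
    if (*end != '\0') {
        return false;                         /* trailing garbage, e.g. "12x" */
    }
    out->value = v;
    out->has_value = true;
    return true;
}
```

Rejecting malformed lines outright, rather than guessing, keeps a stray character on the serial link from changing a stepper parameter by accident.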

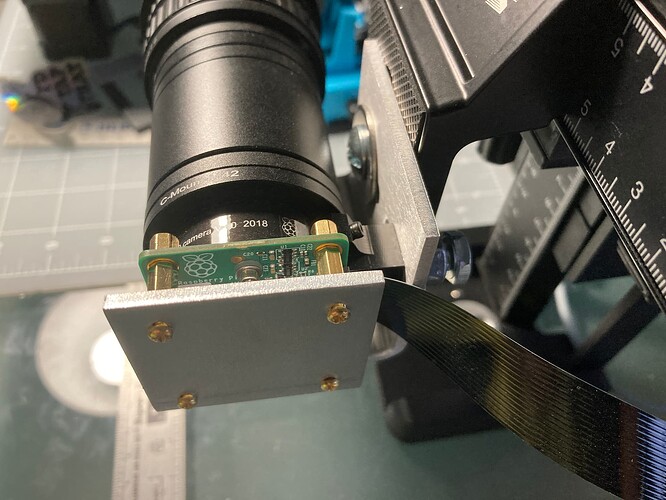

It is always nice when reality imitates CAD… the HQ mounting piece arrived: nice 3 mm aluminum, and a big improvement to the stability of the lens/sensor.

The back mount uses small slots, so the assembly can be adjusted until the T42 extension rests nicely on the bottom plate.

This reduces the strain and lever arm of the lens on the HQ's plastic mount.

The sensor is held to the back with 2.5 mm (10 mm long) standoffs, and also to the side with its 1/4 inch screw. The mount is now attached to the sliders with the forward mounting screw, a real improvement over mounting by the HQ's plastic lens mount.

This was well worth the price of the laser cutting/bending… the piece was US$14 (not including shipping/taxes/minimum order).

Stay tuned.